vpp-ipfix

IPFIX flow export enriched with application metadata — app name, category, TLS SNI, JA3 fingerprint.

vpp-ipfix exports IPFIX (RFC 7011) flow records directly from VPP’s data plane, enriched with nDPI classification metadata. Records carry the full 5-tuple plus application name, category, risk flags, TLS SNI, and JA3 fingerprint — sent to any standard IPFIX/NetFlow collector.

Status

Available. Compiled into ndpi_plugin.so. Apache 2.0.

Configuration

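The exporter is compiled into ndpi_plugin.so, so enabling that plugin in startup.conf makes it available. The ndpi section sets the per-worker flow table size and the TCP and UDP idle timeouts: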

plugins {
  plugin default { disable }
  plugin ndpi_plugin.so { enable }
}

ndpi {
  flows-per-worker 65536
  tcp-idle-timeout 60
  udp-idle-timeout 30
}

CLI reference

| Command | Description |
|---|---|
| set ndpi-ipfix exporter collector <IP> port <port> | Add an IPFIX collector |
| set ndpi-ipfix enable | Start exporting flows |
| set ndpi-ipfix disable | Pause export (collector stays configured) |
| clear ndpi-ipfix exporter | Remove all collectors |
| show ndpi-ipfix exporter | Show configured collectors |
| show ndpi-ipfix stats | Export counters |
| clear ndpi-ipfix stats | Reset counters |

Example output

vppctl set ndpi-ipfix exporter collector 192.168.1.100 port 2055
vppctl set ndpi-ipfix enable
vppctl show ndpi-ipfix stats
IPFIX export: enabled
Collector 0: 192.168.1.100:2055 (fd=12)
flows exported: 924
PDUs sent: 155
ring overflow drops: 0
UDP send errors: 0
templates sent: 1
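For monitoring, the counters can be scraped from the CLI output. The following is a minimal sketch in Python, assuming the output keeps the "key: value" layout of the sample above; `parse_ipfix_stats` is a hypothetical helper, not part of the plugin.

```python
import re


def parse_ipfix_stats(text):
    """Parse `show ndpi-ipfix stats` output (layout assumed from the sample)."""
    stats = {"collectors": []}
    for raw in text.splitlines():
        line = raw.strip()
        if not line:
            continue
        # Collector lines look like "Collector 0: 192.168.1.100:2055 (fd=12)".
        m = re.match(r"Collector \d+:\s*(\S+):(\d+)", line)
        if m:
            stats["collectors"].append((m.group(1), int(m.group(2))))
            continue
        key, sep, value = line.partition(":")
        if not sep:
            continue
        value = value.strip()
        # Counters become ints; anything else (e.g. "enabled") stays a string.
        stats[key.strip()] = int(value) if value.isdigit() else value
    return stats


SAMPLE = """\
IPFIX export: enabled
Collector 0: 192.168.1.100:2055 (fd=12)
flows exported: 924
PDUs sent: 155
ring overflow drops: 0
UDP send errors: 0
templates sent: 1
"""

stats = parse_ipfix_stats(SAMPLE)
flows_per_pdu = stats["flows exported"] / stats["PDUs sent"]
print(stats["IPFIX export"], stats["collectors"], round(flows_per_pdu, 2))
```

With the sample counters this works out to roughly six flow records per PDU. Non-zero "ring overflow drops" or "UDP send errors" are the values worth alerting on.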

Custom Information Elements

Records use IANA standard IEs for the 5-tuple, counters, and timestamps, plus ntop enterprise IEs (PEN 35632) for application metadata:

| IE Name | IE ID (PEN 35632) | Type | Size | Description |
|---|---|---|---|---|
| ndpiApplicationId | 1 | uint16 | 2 B | nDPI application protocol ID |
| ndpiApplicationName | 2 | string | 32 B | Human-readable name (e.g. “YouTube”) |
| ndpiCategory | 3 | uint8 | 1 B | Category (Streaming, VoIP, P2P…) |
| ndpiRisk | 4 | uint32 | 4 B | Risk bitmask |
| tlsSni | 5 | string | 64 B | TLS Server Name Indication |
| ja3Hash | 6 | string | 33 B | JA3 client fingerprint |
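To make the table concrete, here is a hedged Python sketch of how these IEs appear on the wire. RFC 7011 encodes an enterprise-specific field in a template as a 16-bit IE ID with the top (enterprise) bit set, a 16-bit length, and the 32-bit PEN. The decoder below assumes the six fields are fixed-length, arrive in table order, and that strings are NUL-padded; none of that is confirmed by the source, and a real collector should follow whatever the template announces. The sample values are illustrative only.

```python
import struct

NTOP_PEN = 35632  # ntop Private Enterprise Number, from the text above

# (name, IE id, kind, length in bytes), copied from the table above
NDPI_FIELDS = [
    ("ndpiApplicationId", 1, "uint", 2),
    ("ndpiApplicationName", 2, "string", 32),
    ("ndpiCategory", 3, "uint", 1),
    ("ndpiRisk", 4, "uint", 4),
    ("tlsSni", 5, "string", 64),
    ("ja3Hash", 6, "string", 33),
]


def field_specifier(ie_id, length, pen=NTOP_PEN):
    """Encode an enterprise Field Specifier: E bit + IE id, length, PEN."""
    return struct.pack("!HHI", 0x8000 | ie_id, length, pen)


def decode_ndpi_fields(buf):
    """Decode the six fields, ASSUMING table order and NUL-padded strings."""
    out, offset = {}, 0
    for name, _ie_id, kind, length in NDPI_FIELDS:
        raw = buf[offset:offset + length]
        offset += length
        if kind == "uint":
            out[name] = int.from_bytes(raw, "big")  # network byte order
        else:
            out[name] = raw.split(b"\x00", 1)[0].decode("utf-8", "replace")
    return out


def risk_bits(mask):
    """Return the positions of the bits set in the ndpiRisk bitmask."""
    return [bit for bit in range(32) if mask & (1 << bit)]


# Illustrative values only; they are not taken from a real capture.
sample = struct.pack(
    "!H32sBI64s33s",
    124, b"YouTube", 5, 0b101,
    b"www.youtube.com", b"0123456789abcdef0123456789abcdef",
)
fields = decode_ndpi_fields(sample)
print(field_specifier(5, 64).hex())
print(fields["ndpiApplicationName"], fields["tlsSni"], risk_bits(fields["ndpiRisk"]))
```

The meaning of each risk bit is defined by nDPI, so `risk_bits` only reports which positions are set and leaves the naming to the collector.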

Collector setup: nProbe + ntopng

The lab ships a ready-made Docker Compose configuration with nProbe as the IPFIX collector and ntopng as the flow browser. nProbe receives IPFIX on UDP/2055 and forwards flows to ntopng over ZMQ:

services:
  nprobe:
    image: ntop/nprobe:latest
    command: ["-i", "none", "-3", "2055", "--ntopng", "tcp://*:5556",
              "--dont-drop-privileges"]
    ports:
      - "2055:2055/udp"
  ntopng:
    image: ntop/ntopng:latest
    entrypoint: >
      bash -c "
        /etc/init.d/redis-server start
        ntopng -i 'tcp://nprobe:5556' --community -w 3001 --disable-login 1 &
        exec tail -f /dev/null
      "
    ports:
      - "3001:3001"
    depends_on:
      - nprobe

Start with docker compose up -d, then point VPP at nProbe:

vppctl set ndpi-ipfix exporter collector $(getent hosts nprobe | awk '{print $1}') port 2055

vppctl set ndpi-ipfix enable
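When the setup is driven from a script rather than a shell, the same two steps can be expressed in Python. This is a sketch under stated assumptions: `collector_commands` is a hypothetical helper, the CLI is taken to expect a literal IP address (hence the name resolution, mirroring the `getent` call above), and only IPv4 is handled.

```python
import ipaddress
import socket


def collector_commands(host, port=2055):
    """Build the vppctl argument lists that register and enable a collector."""
    if not 0 < port < 65536:
        raise ValueError(f"invalid UDP port: {port}")
    ip = socket.gethostbyname(host)   # IPv4 only; literal addresses pass through
    ipaddress.ip_address(ip)          # raises ValueError on a malformed result
    return [
        ["vppctl", "set", "ndpi-ipfix", "exporter", "collector", ip,
         "port", str(port)],
        ["vppctl", "set", "ndpi-ipfix", "enable"],
    ]


# A literal address is used here so the example runs without the lab network.
commands = collector_commands("127.0.0.1")
for argv in commands:
    print(" ".join(argv))
```

Inside the lab network, `collector_commands("nprobe")` would resolve the container name instead, and each argument list can be passed to `subprocess.run(argv, check=True)`.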

Lab screenshots

The following screenshots are taken from the FlowLens software lab: VPP with nDPI → IPFIX → nProbe → ntopng.

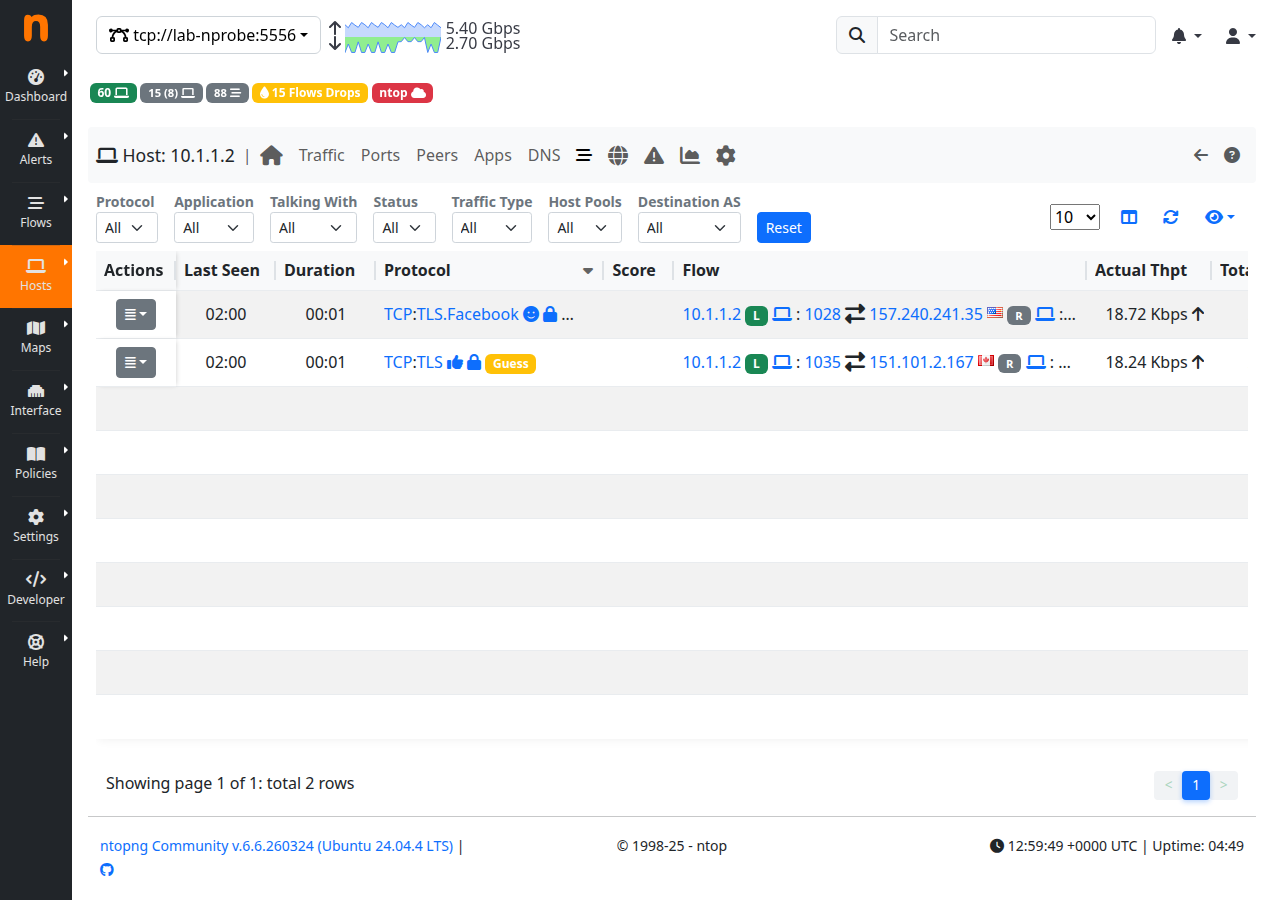

Live flow browser — TCP/TLS flows from VPP’s packet generator with correct 5-tuple data.

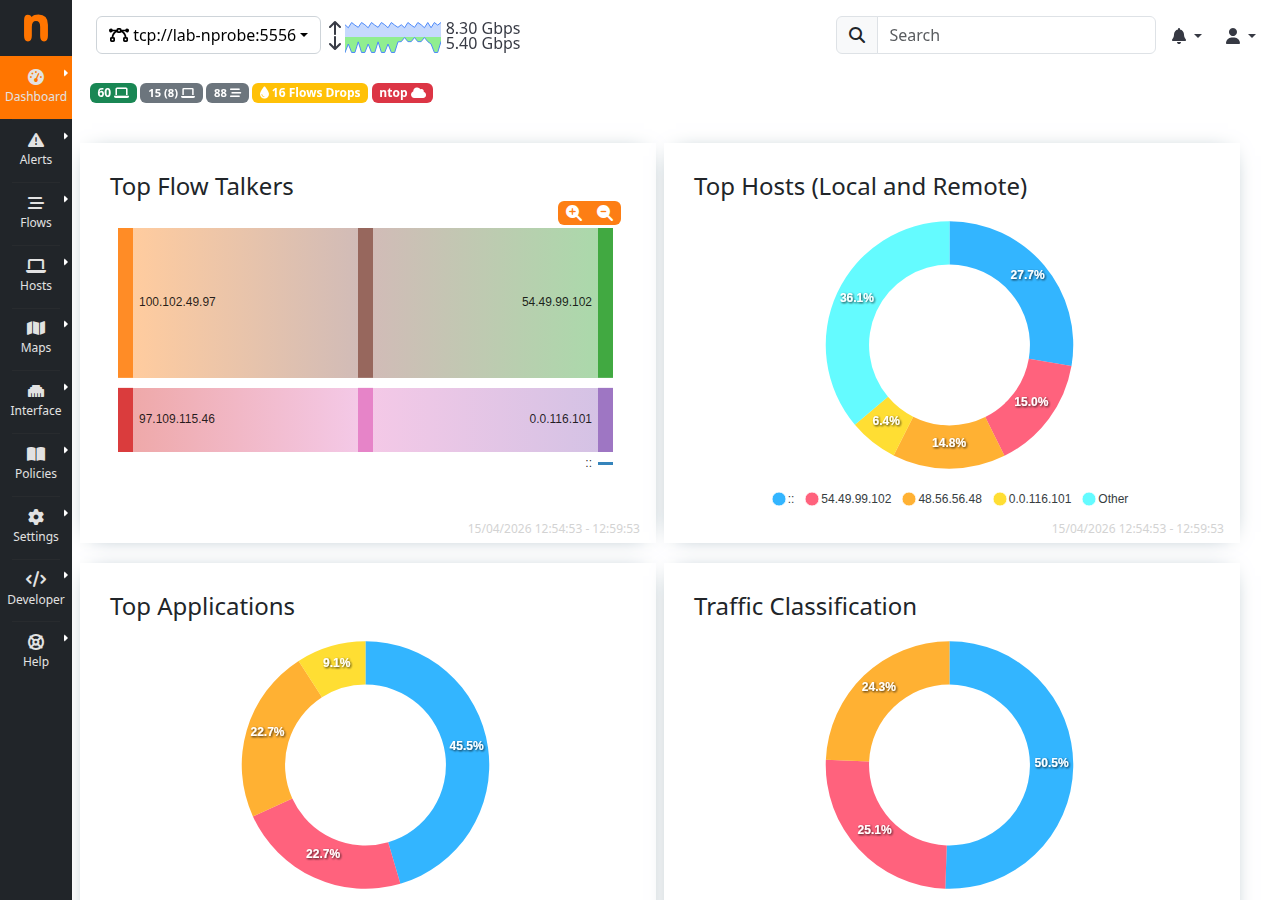

ntopng dashboard — Top Flow Talkers, Top Hosts, and Traffic Classification derived from the IPFIX stream.

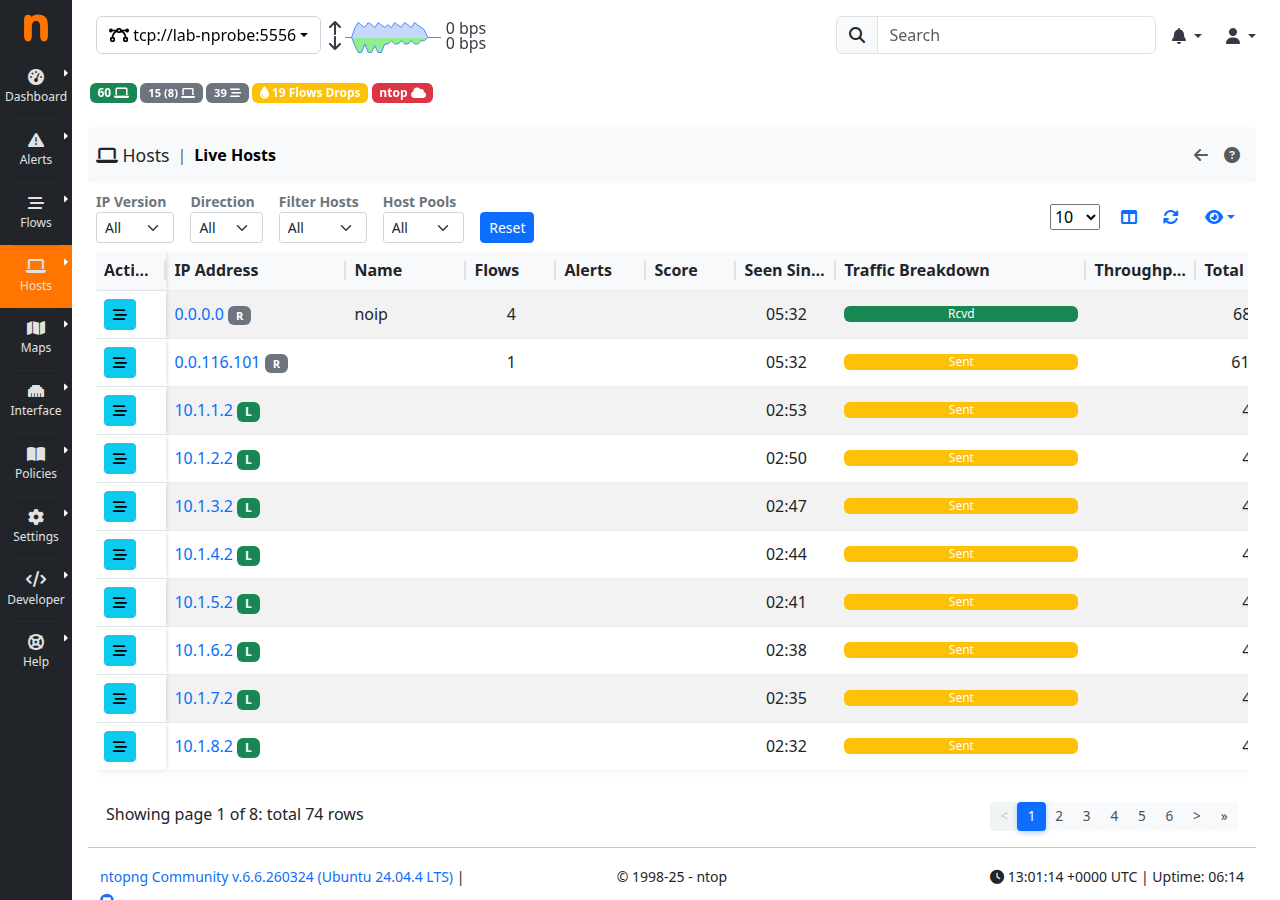

Per-host breakdown — individual host statistics aggregated from the IPFIX flow records.

Collector compatibility

Any RFC 7011-compliant IPFIX collector can consume the export. Standard fields (5-tuple, byte/packet counts, timestamps) are universally supported. Application metadata is carried in ntop enterprise IEs (PEN 35632): collectors that support these IEs (nProbe/ntopng, ElastiFlow) show application names and categories directly, while others still receive the standard fields and may apply their own classification.
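The reason a collector without knowledge of PEN 35632 can still use the standard fields is that every field in an IPFIX template declares its own length, so unknown enterprise IEs can be skipped by size. The Python sketch below illustrates this using the RFC 7011 message, set, and field-specifier layouts. It is a minimal illustration, not a collector: it reads only the first template record in a set, ignores variable-length fields, and the sample message is hand-built rather than captured from VPP.

```python
import struct

IPFIX_VERSION = 10
TEMPLATE_SET_ID = 2


def parse_message(datagram):
    """Split one IPFIX message into its header and a list of (set_id, body)."""
    version, length, export_time, sequence, domain = struct.unpack_from(
        "!HHIII", datagram, 0)
    if version != IPFIX_VERSION or length > len(datagram):
        raise ValueError("not a complete IPFIX message")
    header = {"version": version, "length": length, "export_time": export_time,
              "sequence": sequence, "domain": domain}
    sets, offset = [], 16
    while offset + 4 <= length:
        set_id, set_len = struct.unpack_from("!HH", datagram, offset)
        if set_len < 4 or offset + set_len > length:
            raise ValueError("malformed set header")
        sets.append((set_id, datagram[offset + 4:offset + set_len]))
        offset += set_len
    return header, sets


def parse_template(body):
    """Parse the first template record: (template_id, [(pen, ie_id, length)])."""
    template_id, field_count = struct.unpack_from("!HH", body, 0)
    offset, fields = 4, []
    for _ in range(field_count):
        raw_id, length = struct.unpack_from("!HH", body, offset)
        offset += 4
        pen = None
        if raw_id & 0x8000:  # enterprise bit set: a 32-bit PEN follows
            (pen,) = struct.unpack_from("!I", body, offset)
            offset += 4
        fields.append((pen, raw_id & 0x7FFF, length))
    return template_id, fields


# Hand-built template: sourceIPv4Address (8), destinationIPv4Address (12),
# and the enterprise tlsSni field (IE 5, 64 bytes, PEN 35632).
template = (struct.pack("!HH", 256, 3) + struct.pack("!HH", 8, 4)
            + struct.pack("!HH", 12, 4) + struct.pack("!HHI", 0x8000 | 5, 64, 35632))
template_set = struct.pack("!HH", TEMPLATE_SET_ID, 4 + len(template)) + template
message = struct.pack("!HHIII", IPFIX_VERSION, 16 + len(template_set), 0, 0, 1) + template_set

header, sets = parse_message(message)
template_id, fields = parse_template(sets[0][1])
record_len = sum(length for _pen, _ie, length in fields)
known_len = sum(length for pen, _ie, length in fields if pen is None)
print(template_id, fields, record_len, known_len)
```

Here a collector that only understands IANA IEs would read the first 8 bytes of each 72-byte record and step over the remaining 64.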

Source

src/plugins/ipfix/ — available via PacketFlow commercial engagement.